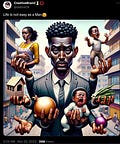

Here’s a look at four possible reasons offered by people on the internet for why the guy in the AI-generated meme below is holding three onions. I don’t know if any of them are right, but each offers a clue into the way AI-generated content is entering into the internet’s bloodstream, and the ways people are evaluating it, explaining it, and coming to understand its possible consequences.

Pop Psychology SEO Gunk

Algorithmic Racism

AI Models Don’t Understand Nuance

He Asked It To Make Onions

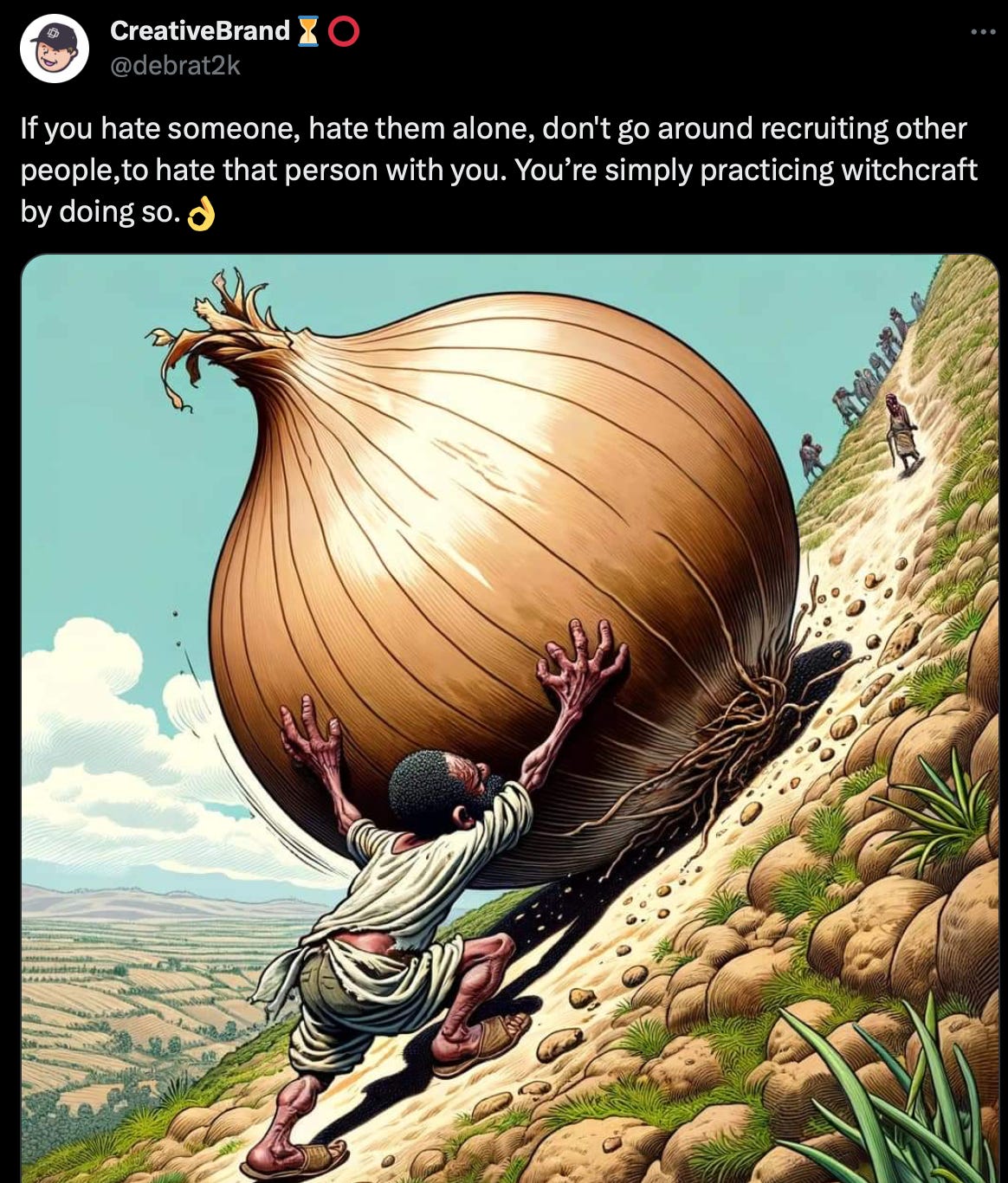

Onion Man is a relatively minor meme from November of 2023 (it has a Know Your Meme entry). It might be more accurate to say it was a “discourse”: the image was widely quote-posted, discussed, and joked about on Twitter, mostly by people saying AI art was ridiculous. Viewers noticed the extra fingers and missing thumbs on this sad-eyed man’s many hands, but most of all they noticed his onions.

If the image is a representation of a modern man juggling many responsibilities — house, children, pregnant wife — then why are three of those responsibilities different kinds of onions?

The account behind the Onion Man tweet, CreativeBrand, usually posts several times a day, and most of those posts don’t get much engagement. CreativeBrand appears to be owned by a Nigerian graphic designer running a business through WhatsApp. Posts include advertisements for their services as well as random stuff. In the past year, the account has posted a lot of AI art, most of it representing families, so the Onion Man post is not atypical for the account.

What is atypical is the performance of the Onion Man post: it’s an absolute banger. From this screenshot CreativeBrand posted, you can see it did numbers and had 86,204 “Detail expands.” Plenty of people didn’t just like this post; they wanted to learn more about it, zoom in closer, touch the photo.

What made the post viral, more than anything else, were the debates in the comments and the jokes in the quote-posts about why the AI generated three onions.

Hypothesis One: Pop Psychology SEO Gunk

People cycled through many possible explanations for the presence of the onions. One offered by a commenter on the original post took the form of this screenshot with some kind of Google answer about “onion people” versus “garlic people.”

The words come from a 2019 blog post by North Carolina psychologist Mark Ledford. It’s not AI, but it reads like it. This is SEO gunk, exactly what Google’s algorithm is built to pick up on: repetitive wording, simple statements, utter flatness.

This reply on the Onion Man post is one of the most popular, and it shows a typical reaction to AI content: people immediately Google it. They see a deepfake and they go to their internet browser to check what the actual facts are, or they see a stray onion and ask Google what it means. But Google is not a person; asking it is more like asking another AI.

If the first line of defense for deepfakes or AI generated content is Googling to check if it’s real, then we’re in trouble, because Search has deteriorated as a tool (this inspired the 2023 practice of everybody Googling something and then putting “reddit” on the end so they’d get a Reddit discussion thread instead of SEO gunk).

Gunk is a real threat to society. Articles about AI poisoning itself are deeply interesting, as is dead internet theory. Proponents of this school of thought argue that most content on the web is already produced and distributed by bots and not people. Since all the algorithms and AIs are fed by this bot content, we’re getting sucked into an ouroboros of AI training itself on the outputs of other AIs, so what you encounter online is increasingly unmoored from what real humans find relevant.

This is a point that’s been raised in recent conversations about the death spiral of American media: if we don’t have content made by professionals anymore, then what we’re stuck with are these algorithms. And if it’s only the algorithms, the answers we can find to explain why AI does something will be thoughtless, irrelevant, and off-topic, like “there are onion people and there are garlic people” from a blog post some rando wrote four years ago.

Hypothesis Two: Algorithmic Racism

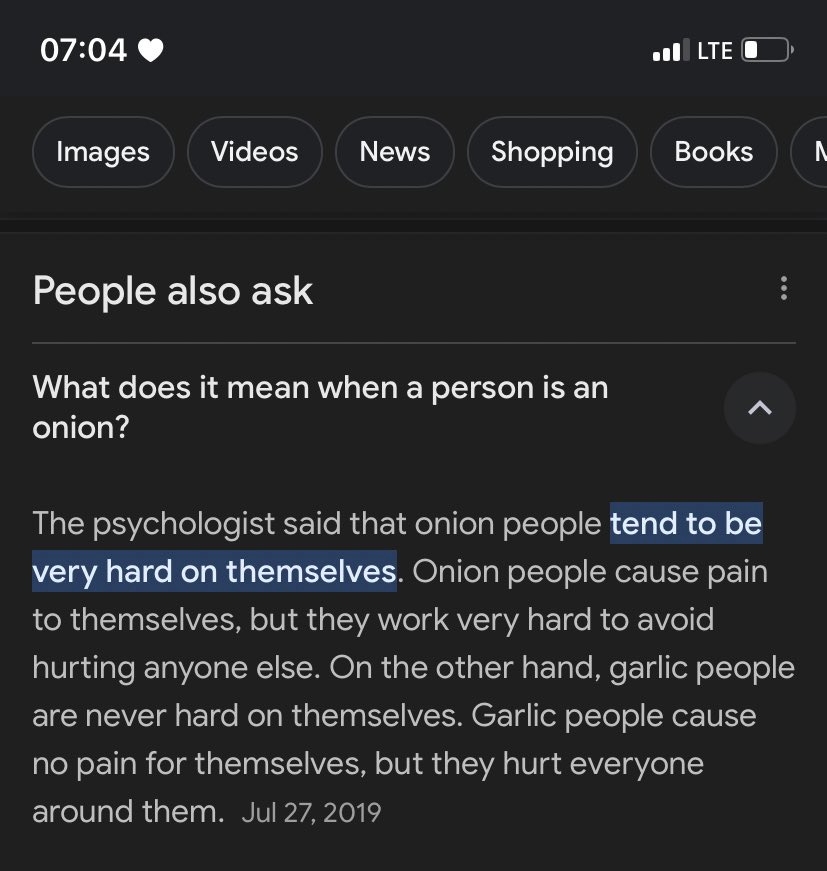

The Onion Man picture was reposted to the subreddit /r/BlackPeopleTwitter, where it received over 4,400 upvotes and almost 200 comments in two months.

While not on Twitter, the subreddit (which has nearly 6 million members) is part of Black Twitter more broadly, one of the most influential schools/scenes for memes — and it’s a frequent launching-pad for memes and trends to go viral. There was a lively discussion in the comments of the post, which was titled “Just Put The Onions Down.” People talked about their own experiences juggling family and work, about graphic design as an industry, about artificial intelligence’s impacts on the world. But most of all they talked about the onions.

AI does pick up on stereotypes. Countless studies have shown that the images AI generates of people fit the stereotypes that a typical 32-year-old Californian tech bro white man would hold. If you ask AI to generate a Mexican person, they tend to be wearing a sombrero. If you ask it to generate a “person,” that person will probably be white.

Safiya Noble’s Algorithms of Oppression records the way in which Google’s algorithm specifically perpetuates racism. AIs, like algorithms, are trained on media made by humans and on actions taken by human users. The AI comes to reflect the biases of people and media. As algorithms go on to shape the very human behavior they’re learning from, they reinforce those tendencies.

The users on /r/BlackPeopleTwitter kept discussing:

Thinking of the “training images” is crucial here. AIs generate based on following patterns identified in the media they intake. If this AI were looking at a bunch of National Geographic photos of Africans and produce, the AI might be led (because it is, at its heart, a sophisticated kind of autocomplete) to suppose that if it sees an African person it’s likely to also see produce. This is a machine built to generalize, which does not reflect objective reality, but rather the version of reality projected by the data it feeds on and the people behind that data.

Hypothesis Three: AI Models Don’t Understand Nuance

To the AI, tears are tears whether they are caused by a vegetable or by an emotional state. It may have scanned through tons of references to how onions make people cry in its training data — because that’s one of the main things onions are famous for.

AI “thinks” and renders rather rigidly. What it makes is more like a Lego castle than a painting. It stacks inferences on top of each other in new forms and shapes, recombining a discrete set of components in creative ways.

An artist might look at the sky and make an inference like an AI model would: “given that the light is coming from the west, I’ll need a bit more white to capture the color over that pine tree.” But once the paints are mixed, they can’t be separated into their discrete parts. And paint relies on the motion of the human hand and the judgment of the human eye in the moment of application to be used interestingly. All an AI model does is make that set of inferences, “if x then y, if y then z…”, but it does not exercise judgment.

So the mistakes AI makes — rendering an extra finger, rendering three onions — are mistakes a human would not make. A poor artist would, perhaps, draw things out of proportion or with insufficient detail — these would be errors of skill or diligence. But where AI messes up is on a different level, because it’s not thinking. It’s wrong the way that a lawnmower or a clock is wrong, not the way a person is wrong. What’s weird is that we’re not used to seeing pieces of art be wrong in this way.

Hypothesis Four: He Asked It To Make Onions

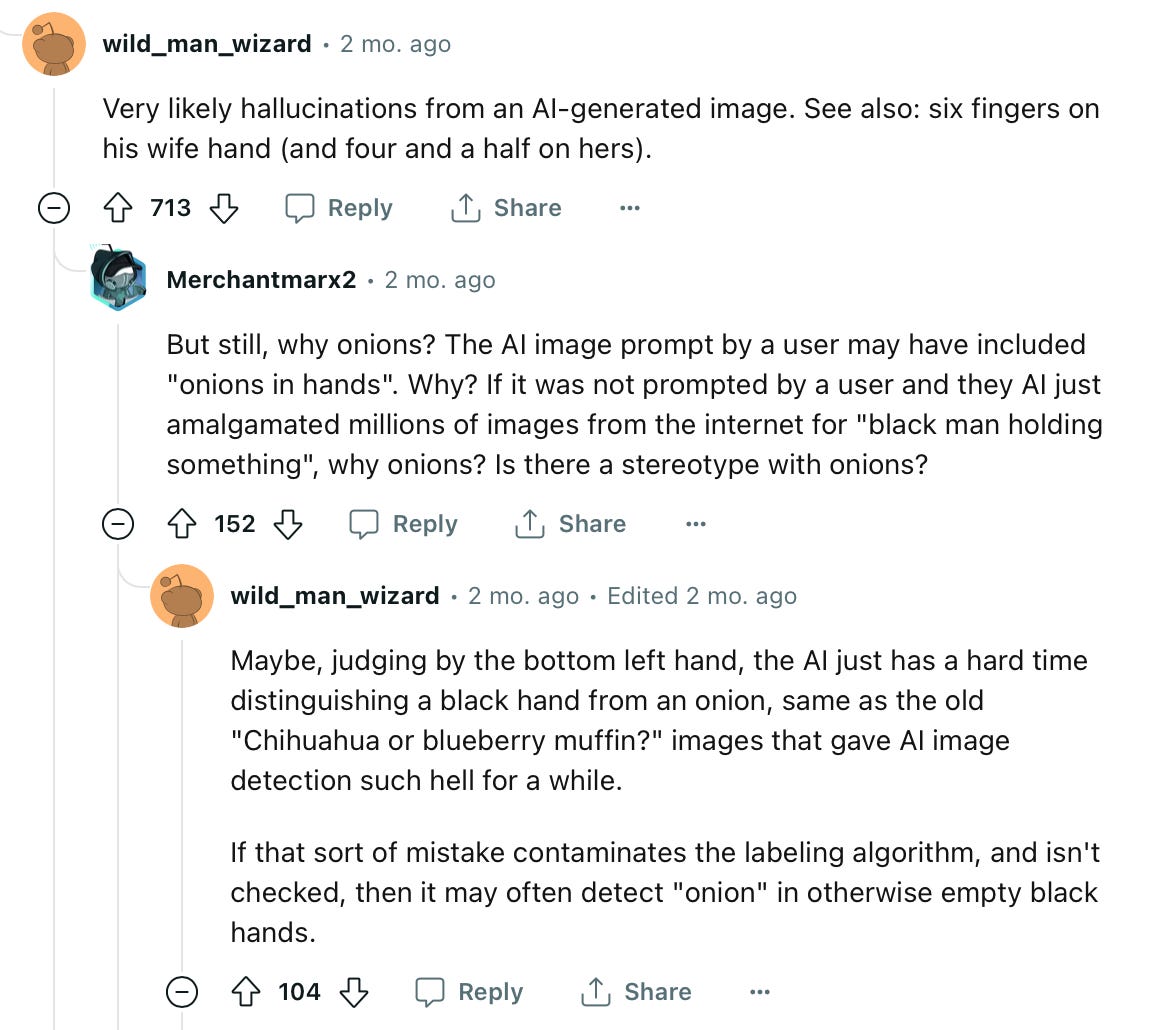

Some eagle-eyed posters looked through CreativeBrand’s past content and noticed these aren’t the first onions he’s made with AI.

This Sisyphean picture of rolling an onion up a hill goes hard, and it precedes the more famous Onion Man.

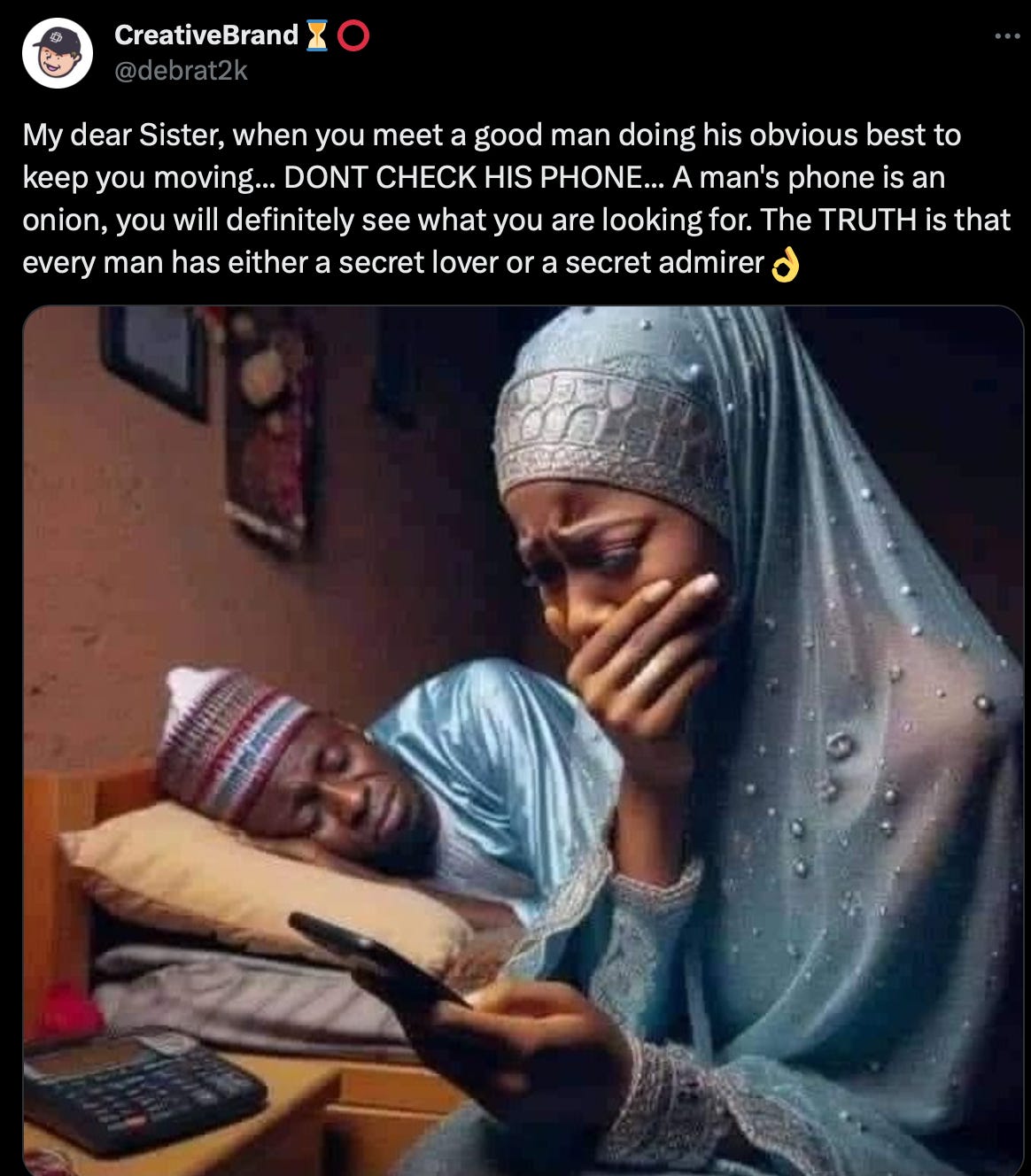

“A man’s phone is an onion.” What does that mean? At this point, I began to wonder if CreativeBrand themself was an AI. This is what happens, perhaps, when you spend too much time looking at AI.

Then (perhaps starting to overthink) I wondered if CreativeBrand (as a human) is making a kind of meta-gesture here. This phone post, after all, is from two months after the viral Onion Man photo. Maybe an “onion” is just any object where “you will definitely see what you are looking for,” meaning that whatever you want to think about AI, the mysterious onions will give you evidence for it. A phone is an onion because if you look at your man’s phone with suspicion, you will find something that confirms your suspicion… and maybe you’d get a different result if you looked at the massive calculator on his bedside table.

So maybe CreativeBrand purposefully prompted the AI to render onions as part of some obscure, Magritte-esque conceptual play? Or maybe he knew the onions would confuse people, spark discussion, and lead to virality.

There’s always an element of human incoherence in art, and that’s part of what makes it beautiful. AI image generators, as a kind of tool, can maybe carry this sort of eccentric creativity just as well as anything else can.

AI Memes Are Serious Business

For many of us, our most substantial engagement with AI-generated content is through memes. Viral memes are anchors to which journalistic coverage and public understandings are moored. Through memes, audiences learn what AI technologies can do and debate their implications. AI memes are also, like any meme, cultural texts that carry meaning, and they’re a great record of evolving public understandings of AI, if effort is made to read them that way.

It’s important to watch the human end of the AI question as closely as we watch the technical or political ends. What will really determine the future of AI is not what tech companies innovate or regulators say, but how everyday people as citizens, users, and creators interact with the technologies. Memes are a piece of figuring out that puzzle.

Once again, Walker takes his analytical scalpel to the onion of the Internet.

I wish I could see the bigger picture the way he does, but I'm just a lowly garlic person.